You just ran a workshop. People clapped at the end, a few said “great session” on their way out, and now you’re left wondering what actually landed and what fell flat. So you send a feedback form with five “rate 1-5” questions and an “any other comments?” box at the bottom.

Two weeks later, you have 11 responses out of 40 attendees. Most of them rated everything a 4. The comments section is either blank or says “good workshop.” You’ve learned nothing.

This is how most post-workshop feedback works, and it’s a waste of everyone’s time. The problem isn’t that people don’t want to give feedback. It’s that generic workshop feedback forms ask the wrong questions in the wrong way at the wrong time.

Here’s how to build one that actually tells you something useful.

Why most workshop evaluation forms fail

The typical training feedback form has two fatal problems.

First, it asks about satisfaction instead of outcomes. “How satisfied were you with today’s workshop?” is a politeness test, not a feedback question. Most people will give you a 4 out of 5 because they don’t want to be rude, and because they can’t articulate what was wrong in a number. You end up with a score that feels good but tells you nothing about what to change.

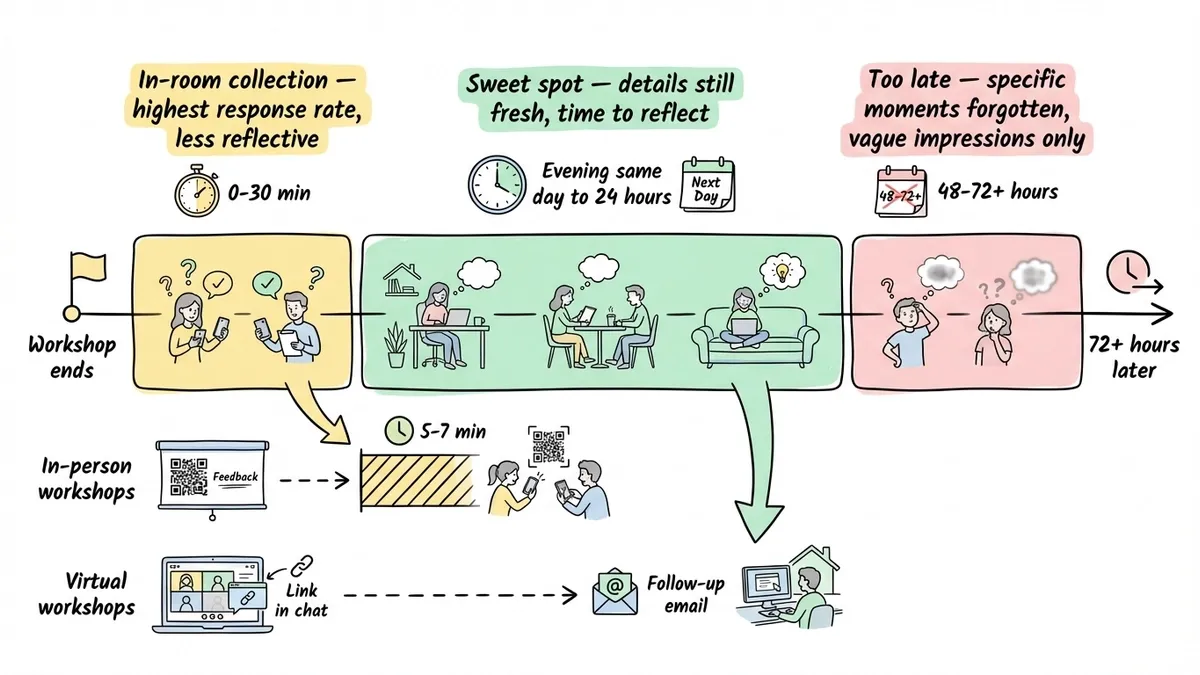

Second, it arrives too late. If you send your post-workshop survey three days after the event, attendees have already forgotten the specific moments that confused them or the exercise that clicked. You get vague impressions instead of concrete observations.

The fix isn’t complicated. You need better questions, better timing, and a form that’s quick enough that people actually finish it.

What to ask on your workshop feedback form

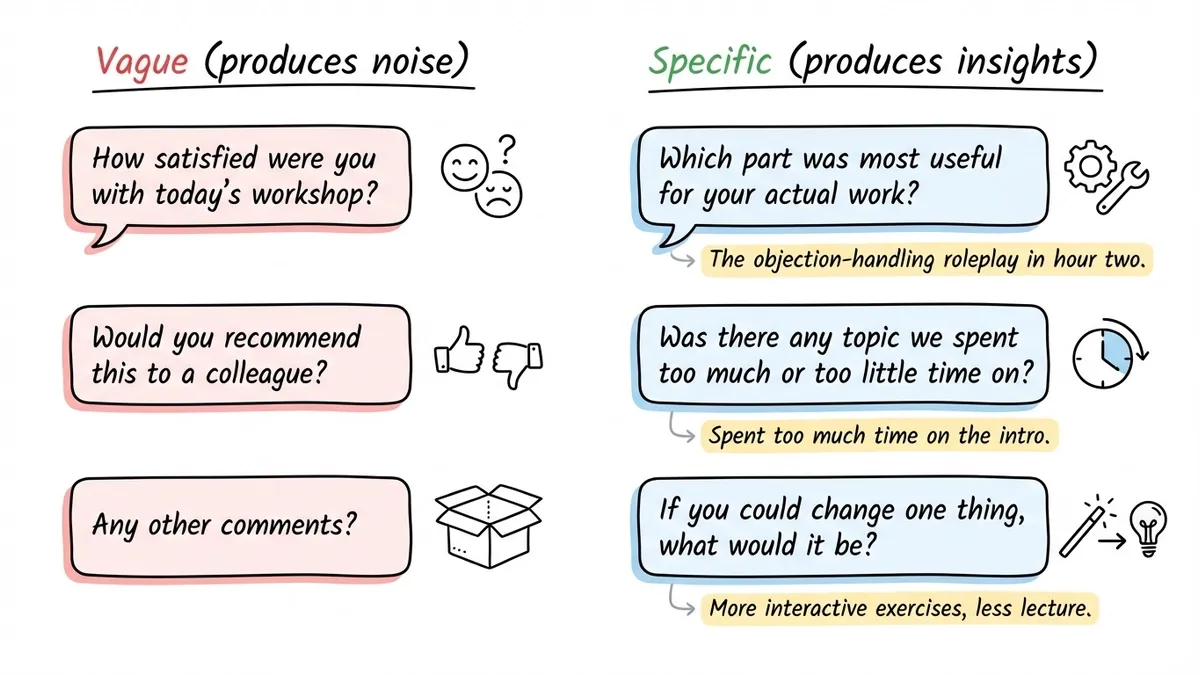

Not all feedback questions are created equal. Some produce data you can act on. Others produce noise that looks like data. Here’s how to tell the difference.

Questions that are mostly worthless

“How would you rate the workshop overall?” gives you a number with no context. If 30 people give it an average of 3.8, what do you do with that? You don’t know if the content was weak, the pacing was off, the room was too cold, or the exercises felt pointless. A single overall rating is the feedback equivalent of someone saying “it was fine.”

“Would you recommend this workshop to a colleague?” is borrowed from NPS surveys, where it makes sense for ongoing customer relationships. For a one-time workshop, it’s too abstract. People don’t know if they’d recommend it because they haven’t tried applying what they learned yet.

“Any other comments?” at the end of a form is where feedback goes to die. By the time someone reaches this question, they’ve already spent their mental energy on the rating scales. An empty text box with no direction produces either nothing or “thanks for the workshop.”

Questions that actually produce useful feedback

The best workshop feedback questions target specific, changeable aspects of the experience. Here’s what I’d include:

Content relevance: “Which part of the workshop was most useful for your actual work?” This forces people to think about application, not just enjoyment. If everyone names the same section, you know what’s working. If nobody mentions a section you spent 45 minutes on, that’s a clear signal.

Pacing and depth: “Was there any topic we spent too much or too little time on?” This is more useful than a pacing rating scale because it tells you exactly where to adjust. A Likert scale saying pacing was “somewhat too fast” doesn’t help you decide which section to slow down.

Skill confidence: “How confident do you feel applying [specific skill] after today’s session?” Use a scale here (1-5 works fine), but tie it to a concrete skill, not the workshop as a whole. If you taught three techniques, ask about each one separately.

The one-change question: “If you could change one thing about this workshop, what would it be?” This is the single most valuable question on any seminar feedback form. It’s specific enough to produce actionable answers but open enough to catch things you didn’t think to ask about. People who wouldn’t write a paragraph in “any other comments” will give you a sentence here because the constraint makes it easier.

Missing content: “Was there anything you expected to learn that we didn’t cover?” This catches gaps between your marketing or description and the actual content. It also surfaces topics for future workshops.

How many questions to include

Aim for 8-12 questions. Fewer than that and you’re probably not covering enough ground. More than that and completion rates drop fast, especially if people are filling out the form on their phones during a break.

If you’re running a half-day or full-day workshop, you can push toward 12. A 60-90 minute session is the exception to that range: keep it under 8, even if that means covering less ground. The length of the form should feel proportional to the time someone invested in attending.

Likert scales vs. open-ended questions: when to use each

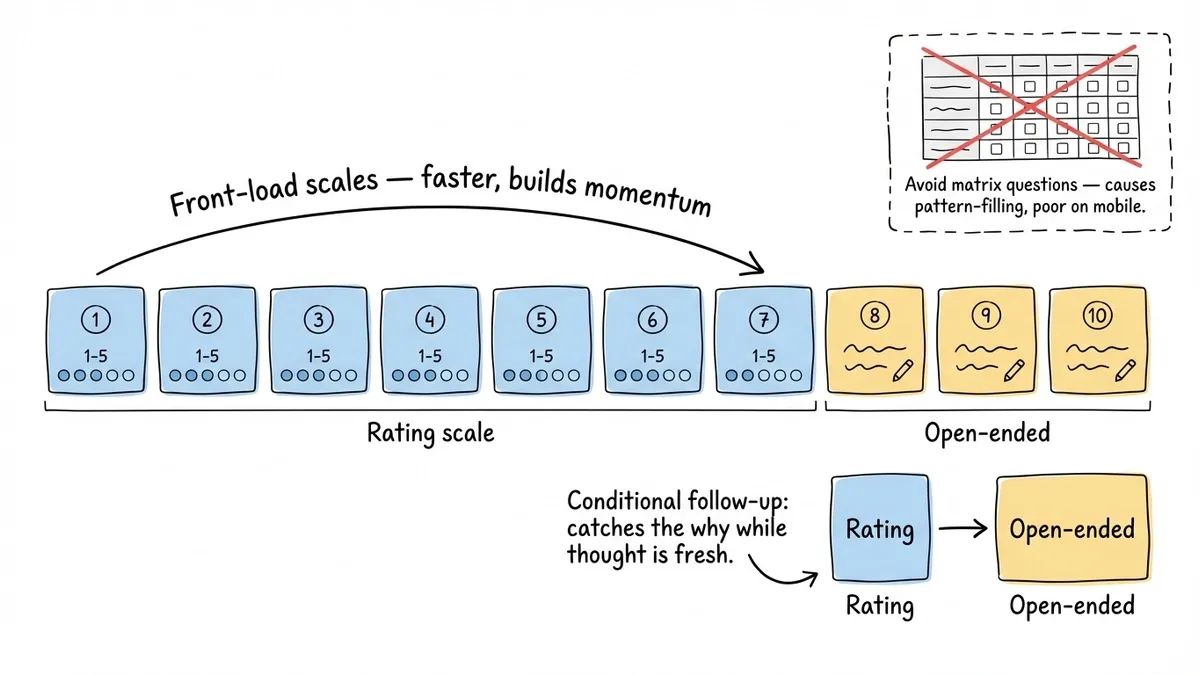

This is where a lot of workshop organizers get it wrong. They either go all rating scales (easy to analyze, hard to learn from) or all open-ended (rich data, painful to analyze, low completion rates).

Use Likert scales for things you want to track over time

If you run the same workshop repeatedly, rating scales let you compare across sessions. “How confident do you feel applying this skill?” on a 1-5 scale gives you a number you can track from March to June to September. Did the new exercise you added in June move the needle?
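To make the tracking concrete, here is a minimal Python sketch that averages one confidence question per session so you can compare runs. The ratings are made-up illustration data, not real results, and `mean_by_session` is a hypothetical helper name.

```python
# Made-up example ratings (1-5) for the same confidence question,
# collected after three runs of the same workshop.
ratings_by_session = {
    "March": [3, 4, 3, 4, 3, 4],
    "June": [4, 4, 5, 4, 3, 4],
    "September": [4, 5, 4, 5, 4, 5],
}


def mean_by_session(ratings):
    """Return the average rating for each session, rounded to 2 decimals."""
    return {
        session: round(sum(values) / len(values), 2)
        for session, values in ratings.items()
    }


averages = mean_by_session(ratings_by_session)
for session, avg in averages.items():
    print(f"{session}: {avg}")
```

This only works if the question wording and the scale stay identical from one session to the next. Change either and the numbers stop being comparable.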

Keep your scales consistent. Don’t use 1-5 for one question and 1-10 for another. Label both endpoints clearly (“1 = Not at all confident” and “5 = Very confident”). Skip the neutral midpoint if you can: a 4-point scale forces people to lean one direction, which gives you more useful data than a pile of 3s. If you go that route, use the 4-point scale for every rating question so your numbers stay comparable.

Use open-ended questions for things you want to understand

Rating scales tell you what’s working or not. Open-ended questions tell you why. You need both, but the ratio matters.

For a 10-question form, I’d use 6-7 rating scales and 3-4 open-ended questions. Front-load the scales (they’re faster to answer and build momentum) and scatter the open-ended questions throughout rather than dumping them all at the end.

One trick that works well: follow a rating scale with a conditional open-ended question. “How useful was the group exercise?” (1-5 scale), then “What would have made it more useful?” People who just rated it a 2 have something specific in mind, and the open-ended question catches it while the thought is fresh.
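If your form tool doesn’t do this for you, the logic is simple enough to sketch. The Python below is an illustration only: the function name and the cutoff of 3 are my assumptions, not a setting from any particular form builder.

```python
from typing import Optional

# Assumed cutoff: ratings at or below this value trigger the follow-up.
FOLLOW_UP_THRESHOLD = 3


def follow_up_question(rating: int, threshold: int = FOLLOW_UP_THRESHOLD) -> Optional[str]:
    """Return the follow-up prompt for low ratings, or None to skip it."""
    if not 1 <= rating <= 5:
        raise ValueError("rating must be between 1 and 5")
    if rating <= threshold:
        return "What would have made it more useful?"
    return None


# A respondent who rated the exercise 2 sees the follow-up; a 5 does not.
for rating in (2, 5):
    print(rating, "->", follow_up_question(rating))
```

Where you set the cutoff is a judgment call. A lower one keeps the form shorter for happy attendees, while a higher one catches the “it was fine, but” responses.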

Skip matrix questions

Those grid-style questions where you rate five things on the same scale in a table format? They look efficient, but they produce terrible data. People start pattern-filling (4, 4, 4, 3, 4) instead of thinking about each item. They’re also miserable to fill out on mobile. Use individual questions instead.

Timing: when to send your workshop feedback form

Send it within 24 hours. That’s the window. Here’s why.

Right after the workshop ends, people are tired and want to leave. They’ll rush through a form handed to them at the door. But by the next morning, they’ve had time to sleep on it and process what they learned, while the details are still fresh enough to give specific feedback.

The best approach I’ve seen: mention during the workshop that you’ll send a feedback form, explain briefly why their input matters (“I use this to redesign the next session”), and then email the link that evening or the next morning.

If you’re running an in-person event and want to collect feedback before people leave, build the form completion into the schedule. Block 5-7 minutes at the end, put the link or QR code on screen, and let people fill it out in the room. This gets you the highest response rates, but the feedback tends to be less reflective than what you’d get the next day.

For multi-day workshops or training programs, send a short check-in form at the end of each day (3-4 questions) and a comprehensive event feedback form after the final session. The daily check-ins let you adjust on the fly, and the final form captures the overall experience. We cover a similar approach for ongoing programs in our guide on creating course evaluation forms.

Building the form: practical setup

You don’t need anything fancy. A form builder with rating scales, text fields, and multi-page support covers everything.

Structure it as a multi-page form

Don’t show all 10 questions on one long page. Break them into 2-3 pages grouped by topic: content questions on page one, delivery and format on page two, open-ended and suggestions on page three.
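As a rough sketch of that grouping, here is one way to tag questions by topic and split them into pages in Python. The topic labels and the `build_pages` helper are hypothetical; this is not any form builder’s API, just the structure written out as data.

```python
# Each question is tagged with a topic. The tags are illustrative.
questions = [
    ("content", "How relevant was the workshop content to your current role?"),
    ("content", "Which section or activity was most valuable to you?"),
    ("delivery", "How would you rate the pacing of the workshop?"),
    ("delivery", "Were the hands-on exercises helpful for understanding the material?"),
    ("suggestions", "What's one thing you'd change about this workshop?"),
    ("suggestions", "Was there anything you expected to learn that we didn't cover?"),
]

# Page order follows the grouping described above.
PAGE_ORDER = ["content", "delivery", "suggestions"]


def build_pages(tagged_questions, page_order):
    """Group questions into pages by topic, keeping the original question order.

    Raises KeyError if a question uses a topic that is not in page_order.
    """
    pages = {topic: [] for topic in page_order}
    for topic, text in tagged_questions:
        pages[topic].append(text)
    # Drop empty pages so respondents never see a blank step.
    return [(topic, items) for topic, items in pages.items() if items]


pages = build_pages(questions, PAGE_ORDER)
for number, (topic, items) in enumerate(pages, start=1):
    print(f"Page {number} of {len(pages)} ({topic}): {len(items)} questions")
```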

Multi-page forms feel shorter even when they have the same number of questions. People see “page 1 of 3” and think “I can do three pages.” Show them 10 questions stacked vertically and they think “this is going to take forever.” We’ve written more about this in our post on what multi-step forms are and why they work.

In Fomr, you can set up multi-page forms with a progress indicator so respondents always know where they are. The auto-jump mode works well for feedback forms because it moves people through questions one at a time, keeping them focused.

Make it look like it belongs to you

A feedback form that looks like a default template signals that you didn’t put much thought into it. Match the form’s colors and fonts to your workshop branding or your organization’s visual identity. This is a small thing, but it affects how seriously people take the form.

Share it the right way

For in-person workshops, a QR code on the final slide is the fastest path. People scan it with their phone and start filling out the form immediately.

For virtual workshops, drop the link in the chat during the last five minutes and in the follow-up email. Include a direct link, not a “click here to provide feedback” button buried in a paragraph of text.

If you want to collect feedback without requiring attendees to create accounts or jump through hoops, Fomr’s guest editor lets you build and share forms without any signup friction on either end.

Acting on the feedback (the part most people skip)

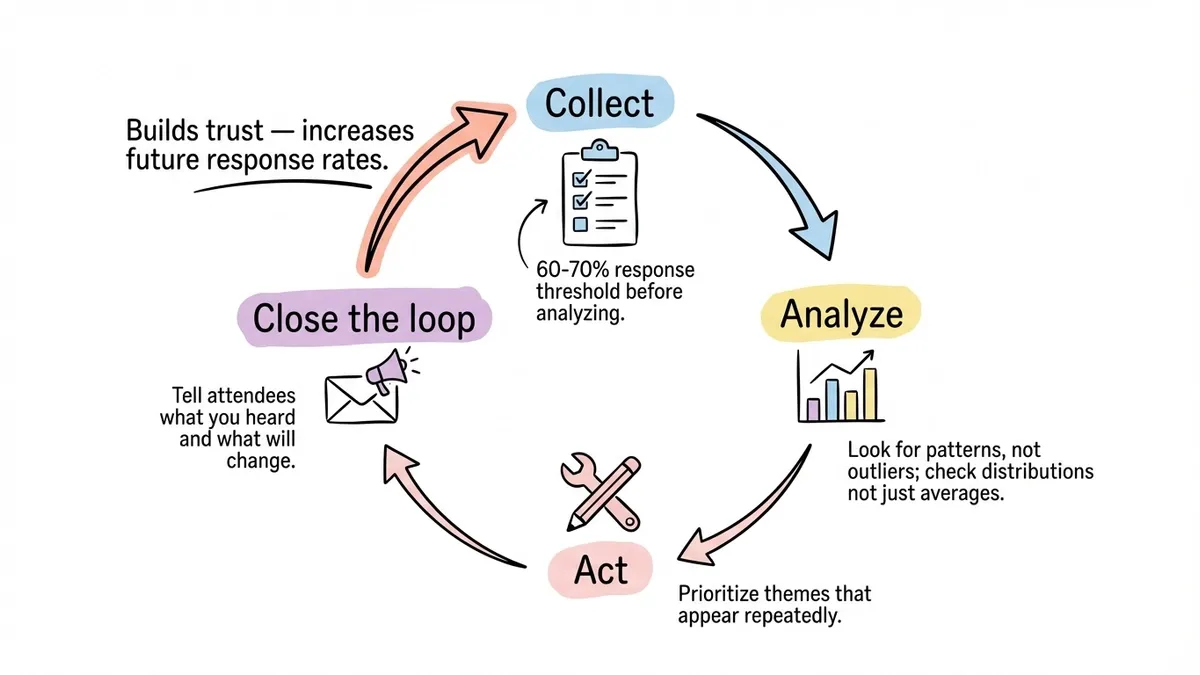

Collecting feedback is the easy part. The hard part is doing something with it. Here’s a simple process that works.

Read everything before you react

Don’t start making changes after reading the first three responses. Wait until you have at least 60-70% of your expected responses, then read through all of them before drawing conclusions. Early responses tend to skew toward people with strong opinions (positive or negative), and they’re not always representative.

Look for patterns, not outliers

One person saying “the exercises were too easy” is an opinion. Eight people saying it is a pattern. Focus your energy on the themes that show up repeatedly. If you’re using rating scales, look at the distribution, not just the average. A question with an average of 3.5 could mean everyone thought it was okay, or it could mean half the room loved it and half hated it. Those are very different situations.
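Here is a small Python sketch of why the distribution matters. Both sets of ratings are made up for illustration, and `summarize` is a hypothetical helper. They share the same 3.5 average but describe very different rooms.

```python
from collections import Counter

# Two made-up sets of 1-5 ratings with the same average of 3.5.
lukewarm = [3, 4, 3, 4, 3, 4, 3, 4]   # everyone thought it was okay
polarized = [2, 2, 2, 2, 5, 5, 5, 5]  # half the room loved it, half didn't


def summarize(ratings):
    """Return the average and the count of responses at each scale point (1-5)."""
    counts = Counter(ratings)
    average = sum(ratings) / len(ratings)
    distribution = {point: counts.get(point, 0) for point in range(1, 6)}
    return average, distribution


for label, ratings in (("lukewarm", lukewarm), ("polarized", polarized)):
    average, distribution = summarize(ratings)
    print(f"{label}: average={average}, distribution={distribution}")
```

If the counts cluster at both ends of the scale, go read the open-ended answers from each group separately before changing anything.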

Close the loop

This is the step that separates good workshop organizers from great ones. Send a brief follow-up to attendees summarizing what you heard and what you plan to change. “Several of you mentioned the afternoon session felt rushed, so next time we’re extending it by 30 minutes and cutting the intro section shorter.”

People who see their feedback acted on become repeat attendees and enthusiastic referrers. People who feel like their feedback disappeared into a void stop bothering to fill out your forms. This same principle applies to any feedback collection, whether it’s customer feedback or satisfaction surveys.

A sample question set to start with

Here’s a workshop feedback form structure you can adapt. It’s designed for a 2-4 hour workshop, but you can trim it for shorter sessions.

- “How relevant was the workshop content to your current role?” (1-5 scale)

- “Which section or activity was most valuable to you?” (open-ended)

- “Was there any topic we spent too much time on?” (open-ended)

- “How confident do you feel applying [primary skill] after this session?” (1-5 scale)

- “How would you rate the pacing of the workshop?” (Too slow / About right / Too fast)

- “Were the hands-on exercises helpful for understanding the material?” (1-5 scale)

- “What’s one thing you’d change about this workshop?” (open-ended)

- “Was there anything you expected to learn that we didn’t cover?” (open-ended)

- “How likely are you to attend another workshop from us?” (1-5 scale)

- “Anything else you’d like us to know?” (optional, open-ended)

That’s 5 rating questions (counting the three-option pacing question) and 5 open-ended questions. The open-ended ones are specific enough to produce useful answers, and the rating questions give you trackable metrics. For shorter workshops, you can drop the eighth question (missing content) and the tenth (anything else).
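If you keep your question set in a script or spreadsheet export, it can help to store it as plain data so you can check the mix and trim it for shorter sessions. The Python below is a sketch under that assumption. The "rating" and "open" tags and both helper names are mine, and the pacing question is counted as a rating.

```python
# The ten sample questions above, tagged by answer type.
# "rating" covers the 1-5 scales and the three-option pacing question.
SAMPLE_QUESTIONS = [
    ("rating", "How relevant was the workshop content to your current role?"),
    ("open", "Which section or activity was most valuable to you?"),
    ("open", "Was there any topic we spent too much time on?"),
    ("rating", "How confident do you feel applying [primary skill] after this session?"),
    ("rating", "How would you rate the pacing of the workshop?"),
    ("rating", "Were the hands-on exercises helpful for understanding the material?"),
    ("open", "What's one thing you'd change about this workshop?"),
    ("open", "Was there anything you expected to learn that we didn't cover?"),
    ("rating", "How likely are you to attend another workshop from us?"),
    ("open", "Anything else you'd like us to know?"),
]


def count_by_kind(questions):
    """Count how many questions there are of each answer type."""
    counts = {}
    for kind, _ in questions:
        counts[kind] = counts.get(kind, 0) + 1
    return counts


def trim_for_short_session(questions, drop_positions=(8, 10)):
    """Drop questions by 1-based position (by default the 8th and 10th)."""
    return [
        question
        for position, question in enumerate(questions, start=1)
        if position not in drop_positions
    ]


short_form = trim_for_short_session(SAMPLE_QUESTIONS)
print(count_by_kind(SAMPLE_QUESTIONS))
print(len(short_form), count_by_kind(short_form))
```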

Start collecting better workshop feedback

The difference between a workshop that improves over time and one that stays the same is usually just a well-designed feedback form and the discipline to act on what it tells you. You don’t need a complex survey platform or a statistics degree. You need the right questions, sent at the right time, in a form that respects people’s time.

Build your workshop feedback form for free and start collecting responses that actually help you improve your next session.